To the delight of those of us enjoying the ride on the anti-power-law bandwagon (bandwagons are ok if they’re a backlash to another bandwagon), Cosma links to a new article in Science, “Critical Truths About Power Laws,” by Stumpf and Porter. Since it’s behind a paywall you might as well go read the Clauset/Shalizi/Newman paper on the topic, and since you won’t be bothered to read the paper, see the blogpost entitled “So You Think You Have a Power Law — Well Isn’t That Special?”

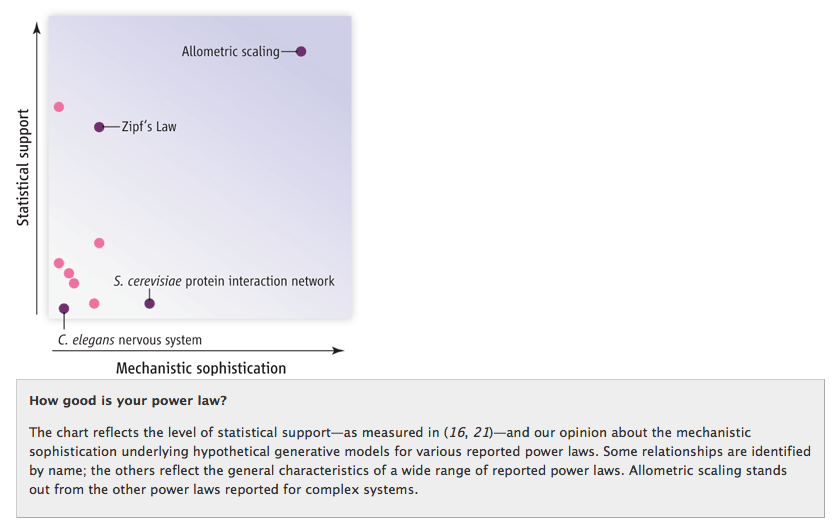

Anyway, the Science article is nice — it amusingly refers to certain statistical tests as “epically fail[ing]” — and it’s on the side of truth and goodness so it should be supported, BUT, it has one horrendous figure. I just love that, in this of all articles that should be harping on deeply flawed uses of (log-log) plots, they use one of those MBA-style bozo plots with unlabeled axes, one of which is viciously, unapologetically subjective:

If there is one power law I may single out for mercy in this delightful but verging-on-scary witch hunt, it would be Zipf’s law, cruelly put a bit low on that “mechanistic sophistication” axis. Zipf’s law has a wonderful explanation as the outcome of a Pitman-Yor process (going back to Simon 1955!), and Clauset/Shalizi/Newman found it was the only purported power law that actually checked out:

> There is only one case—the distribution of the frequencies of occurrence of words in English text—in which the power law appears to be truly convincing, in the sense that it is an excellent fit to the data and none of the alternatives carries any weight.

Now, it is the case that the CRP/PYP/Yule-Simon stuff is still more of a statistical generative explanation than a deeper mechanistic one; but no one knows how cognition works, there are no satisfying causal stories for linguistic production, and it’s probably fundamentally unknowable anyways, so that’s the best science you can get. yay.
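For the curious, here is a minimal sketch of what that "statistical generative explanation" looks like in practice: the two-parameter Chinese restaurant process that underlies the Pitman-Yor process, simulated token by token. The function name `pitman_yor_counts` and the parameter values are my own illustrative choices, not anything from the papers above, and this is the CRP view rather than Simon's 1955 model itself. Each new token is either a brand-new word type or a repeat of an existing one, with repeats favoring already-frequent types.

```python
import random


def pitman_yor_counts(n_tokens, discount=0.5, concentration=1.0, seed=0):
    """Simulate a two-parameter Chinese restaurant process.

    Returns the token count of each word type, largest first.
    Assumes 0 <= discount < 1 and concentration > 0 (illustrative defaults).
    """
    rng = random.Random(seed)
    counts = []  # counts[k] = number of tokens of word type k so far
    for n in range(n_tokens):
        k_types = len(counts)
        # Probability that token n+1 is a previously unseen word type.
        p_new = (concentration + discount * k_types) / (n + concentration)
        if rng.random() < p_new:
            counts.append(1)
        else:
            # Reuse an existing type with probability proportional to
            # (its current count - discount): rich get richer.
            weights = [c - discount for c in counts]
            k = rng.choices(range(k_types), weights=weights)[0]
            counts[k] += 1
    return sorted(counts, reverse=True)


if __name__ == "__main__":
    freqs = pitman_yor_counts(20000, discount=0.7, concentration=1.0, seed=42)
    # Print a few (rank, frequency) pairs; on a log-log plot these should
    # look roughly linear, which of course proves nothing by itself.
    for rank in (1, 2, 5, 10, 50, 100):
        if rank <= len(freqs):
            print(rank, freqs[rank - 1])
```

Whether the resulting rank-frequency curve is "really" a power law is exactly the kind of question the Clauset/Shalizi/Newman tests are for; eyeballing the printout is the thing we are not supposed to do.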

You just invented a new kind of plot – the ‘bozo plot’. Give it a few months and it will be in the dropdown on Matlab.

I’d like to point out there are some pretty boring (i.e., monkeys and typewriters) mechanistic processes which could also explain Zipf’s law: http://www.nslij-genetics.org/wli/pub/ieee92_pre.pdf

Really?

Ferrer-i-Cancho, R. & Elvevåg, B. (2010). Random texts do not exhibit the real Zipf’s law-like rank distribution. PLoS ONE 5 (3), e9411.

http://dx.doi.org/10.1371/journal.pone.0009411